Chapter 3: The impact of code-driven legal technologies

3.1 Introduction

For the purposes of this chapter, we adopt a definition of code-driven law as ‘legal norms or policies that have been articulated in computer code, either by a contracting party, law enforcement authorities, public administration or by a legislator.’1 More specifically, we focus on the latter two categories, given the increasing interest and speed of development in the ‘rules as code’ space and the tangible efforts of public administrations in adopting it for real-world application.2 This can be contrasted with so-called ‘cryptographic law’ based on blockchain applications, which despite huge interest (and hype) in recent years has nevertheless lost a great deal of public and scholarly attention in light of the ongoing collapse of initiatives based on cryptocurrency and non-fungible tokens (NFTs).3

Keeping the emphasis on the articulation of public legal norms in computer code, this chapter focuses primarily on ‘rules as code’ (RaC) as a subset of code-driven law. RaC initiatives are becoming very real, and the articulation in code of legal norms which lies at its heart speaks directly to the notion of computational law having a potential ‘effect on legal effect’.4 The position of our first Research Study is that attributed legal effect is a central mechanism by which law and the Rule of Law can provide protection, and so it is essential to enquire whether and how both the concepts of attribution and of legal effect might be impacted by the introduction of certain kinds of computation.

In the remainder of this chapter, we first consider what kinds of system and approach ‘rules as code’ refers to, gleaning some definitions from various prominent commentators. We then attempt to make sense of the RaC landscape by placing these systems and approaches on a spectrum of impact on law, identifying the potential issues raised by RaC systems as they progress further away from text-driven legality at one end of the spectrum towards code-driven legalism at the other. In the final section of the chapter, we take a step back from individual systems/approaches to consider what the COHUBICOL perspective can tell us more broadly about the implications of code-driven law for legal protection and the Rule of Law.

Ultimately the analysis is nuanced: some Rules as Code approaches have the potential to enhance the practices that enable legal protection and the Rule of Law, while others reflect an instrumental idea of what a legal rule is and how it should be treated. The latter demonstrate a legalistic conception and application of the law, which is at odds with the idea of legality, whereby law is not just about rules, but also – and crucially – about affording spaces and procedures which allow for the interpretation, deliberation, and contestation of what those rules ought to mean in particular circumstances. Those affordances, which are readily supported by text-driven ‘infrastructure’ of law-as-we-know-it, both give law its capacity to provide protection, and give democratic legitimacy to the processes by which legal rules are produced. The interplay between RaC approaches and existing processes of government is complex and multifaceted. The hope in this chapter is to highlight where the introduction of computational methods can enhance the specifically and properly legal character of legislative rules (with all the procedural and interpretative connotations that ought to come with that term), while at the same time avoiding the reductive casting of legal rules as no more than technical or commercial instructions for compliance.

3.2 Rules as Code

There is no settled consensus on what precisely RaC is, or what it should seek to be. This perhaps reflects the fact that people from a variety of professional backgrounds are expressing an interest in its development and use, and so bring a range of ideas about what it is and what it ought to be and do. In that sense we hope this Research Study, along with the prior Research Study on Text-Driven Law,5 might contribute something to the normative development of its scope and aims.

As Mohun and Roberts put it in the OECD’s working paper ‘Cracking the Code: Rulemaking for humans and machines’, ‘RaC envisions a fundamental transformation of the rulemaking process itself and of the application, interpretation, review and revision of the rules it generates.’6 They adopt de Sousa’s definition of RaC as ‘the process of drafting rules in legislation, regulation, and policy in machine-consumable languages (code) so they can be read and used by computers.’7

Kelly suggests that, ‘at its most basic’,

[RaC] is a granular agile project management methodology focussed on

- Creating a transparent algorithmic law representation, centred on decision tree diagramming and structured languages;

- Secure, cloud-based production platforms, allowing iteration, testing, access, and maintenance.8

Waddington highlights a variety of approaches that come under the RaC umbrella. He suggests that the approach is

not wedded to any … technology solutions so much as to the idea that the “coding” (or mark-up) of the legislation should be widely usable, traceable to the legislation, rather than adding material to reflect assumptions about procedures or implementation.9

He makes a nuanced normative argument about what RaC should (and should not) seek to achieve:

what if, hype aside, Rules as Code is not really intended to be magically transformative, and is not really about automating legal decisions or about programs that implement law themselves? … [Rules as Code] is about applying human intelligence, rather than AI, and about the less glamorous ways in which computers are already handling law and could do better in aiding humans.10

This is echoed in visions for RaC that are as much about the process of developing rules as the means by which they are represented, processed and enforced. Casanovas, for example, suggests that RaC is

not a new technology (there is no way to present it as such) but an attitude that includes technological and political planning for policy making and a clear will to cope with the demands of the digital age.11

In this context of policy development, de Sousa and Andrews suggest that by making laws ‘machine consumable, we can take a whole new approach to testing them, and modelling different legislative approaches’.12 To achieve this will require new ways of drafting legislation that mean we ‘draft the code and the human-readable text at the same time, and allow them to influence each other’.13

Once the policy has been developed and the RaC translations created (whether directly by the legislature/executive, or subsequently by third parties), the focus can shift to enforcement, compliance and the provision of legal advice. Extensible platforms such as DataLex can ‘be used to develop legal reasoning applications in areas such as legal advisory services, regulatory compliance, decision support and Rules as Code’.14 ‘Low-code’ and ‘no-code’ platforms like Blawx and Neota enable the declaration of rules using intuitive graphical user interfaces that hide the logical rule engines beneath.15 Domain experts who might not have experience with logic or general-purpose programming can thus be closely involved in the process of defining rules.16

The impact of RaC will depend very much on who is afforded what uses by the system, and at what stage of the ‘lifecycle’ of a legal norm. The ‘who’ can be anyone from legislative drafters to public administrators to commercial enterprises to citizens to judges. The point of application can be anything from point of initial conception to the developing and passing of a norm into law to the interpretation of its terms by those subject to them on to the authoritative determination of its meaning by a court (if that ever happens) – as well as many points in between.

3.2.1 The COHUBICOL lens

Taking a step back is part of COHUBICOL’s approach: attempting to tease out the implicit and explicit assumptions that are reflected in the design of legal technologies and the contexts where they are intended to be deployed. It is essential to properly delineate the ways in which humans (both citizen ‘users’ and legal practitioners, of all kinds) might be aided by technologies like RaC, to ensure that core legal values can be preserved and, where possible, enhanced by the appropriate use of such technology. In that light, some initial observations are worth making here, to frame the analysis later in the chapter.

3.2.1.1 Legality and legalism

Casting law as ‘regulation’, and citizens as ‘consumers of rules’ or ‘rule-takers’, risks adopting a reductive and technocratic view of legal norms as merely instruments of policy, and citizens and other legal subjects as passive targets whose duty is simply to comply. This flattens the relationships that properly constitute legality, as opposed to legalism, in which there is a ‘reciprocity of expectations’ between legislator and citizen.17 Legality is about the reciprocal rule of law, where citizens have a role (however distant) in the making of rules and their subsequent application, whereas legalism is about a top-down rule by law, where laws are commands of a sovereign that we must simply follow.18 In any given instance a failure to perfectly uphold this reciprocity might not matter too much to those involved: perfect legality is an aspiration, and unlikely to be attained in every case (if ever). But in aggregate, the failure to reflect its ethos could threaten the social, civic and professional ‘anchoring practices’ of ‘interpretation, justification, contestation and creative action’ that both give rules their legal character and enable the law to afford protection.19

These practices are forever in tension, requiring constant reinvigoration for law to have legitimacy and to be effective in structuring societal relations.20 When the (technological) conditions that enable those practices change, the question that must be asked is whether and how the practices themselves will change, and possibly falter, perhaps in unforeseen or non-obvious ways. That is the essential question the COHUBICOL project seeks to grapple with.

From that perspective, it is essential not to assume any determinism in the role played by technologies involved in the law: we cannot presume that they will have only beneficial or only negative effects, even if we can assume their introduction will have some kind of impact.21 Instead, to properly identify what that impact is or will be, we need to anticipate (i) who will be afforded what capacities by the technologies, and in what circumstances, (ii) which existing affordances will be changed or removed, and (iii) what impact they might have on the conceptual underpinnings of the law and its particular ‘mode of existence’ (these three elements are of course deeply intertwined).22 Ultimately the hope is that such technologies can be embraced where they facilitate and strengthen the specific type of protection that law affords, and resisted where they promote interests or centres of power that could or would otherwise have been constrained by the Rule of Law.

3.3 The texture of code-driven normativity

We mentioned above the idea that one way to make sense of the RaC landscape is to consider the spectrum of its potential impact on the law. More specifically, we want to tease out the difference between what a legal rule does and what a code rule does, and where particular approaches to RaC sit in relation to those two very different types of ‘normativity’ (the ways in which behaviour, action and practice are shaped through inducement, enforcement, inhibition or prohibition23).

In the first Research Study, we considered the texture or fabric of text-driven normativity from various angles, trying to identify its qualities and the conditions that make it possible. One task in this chapter is to attempt to do something similar for code-driven law (specifically RaC).

Different technologies exert different amounts of ‘normative force’, from suggesting behaviour and action, through to guiding them in ways that can be resisted, on to defining their character and limits from the outset, with no possibility of reconfiguration or resistance.24 From a Science, Technology and Society studies perspective this is true of all technologies; they inevitably shape the practices they are embedded within. This shaping is often imperceptible and can be a constitutive part of the practice, sometimes in ways that are not obvious even to those engaged in the practice.25 Text is perhaps an example of such a technology.26 Despite it playing a fundamental role in shaping the nature of legal rules and the character of their application, this fact can be easily missed because it is so familiar to us.27

Crucially, the normative force of technology operates both through the technology in which the rules are embedded, be that text or code, and on the people involved throughout the lifecycle of a rule – from drafters and policymakers to public administrators, compliance officers, citizens, litigants and judges. While it might be tempting to think of legal rules (and indeed any rule) as cleanly logical and susceptible to application free of external influence, their interpretation and the technological means by which they are produced, accessed and enforced all have an impact on their operation in the real world.

It therefore follows that, when it comes to RaC, normative impact flows not only from the RaC-modelled rules themselves (in whatever specific form they take), but also the technologies used to produce, disseminate and enforce them.28 With respect to production, we can think about the impact of how RaC technologies represent or facilitate foundational concepts of law, for example rights, duties, legal personhood, legal effect and justice.29 With respect to implementation or enforcement, on the other hand, we can consider how RaC affects the interpretative and adjudicative processes of law. In either case, the potential impacts will be different for different actors within the legal system, whose interests and roles are essential to consider when thinking about what it is that legal rules are meant to do, and how.

Text-driven law has a very specific type of normativity. It is a common misconception that rules written in natural language are frustrated commands that would self-enforce if only we could find a way to make that happen. If indeed that were the goal, then the wholesale formalisation and automation of law would make perfect sense, and there would be no need for natural language in law. That this has not happened, despite a huge number of attempts to achieve it, draws attention to the fact that natural language plays a much larger role than simply articulating the terms of the rule. Legal rules are only effective in context, and for them to have any value in structuring society they must be interpreted at point of application, in light of that context.30 Legal rules are also given legal effect in the knowledge that they cannot determine for themselves in advance precisely how they will be understood, or what their meaning will be over time as societal mores and priorities change.31 These are features of natural language that are constitutive of law; they are not bugs to be solved.

If text-driven law affords this specifically legal form of normativity, what kind of normativity might code produce, or exert? This distinction – between code and legal normativity – is of fundamental importance to legal practice, because of the structural implications that flow from each type of rule.32 In stark contrast to natural language rules, rules represented in self-executing code will change the state of the world without the need for the presence or oversight of a human to interpret anything prior to its execution.33

3.3.1 Mixing legal and technological normativity

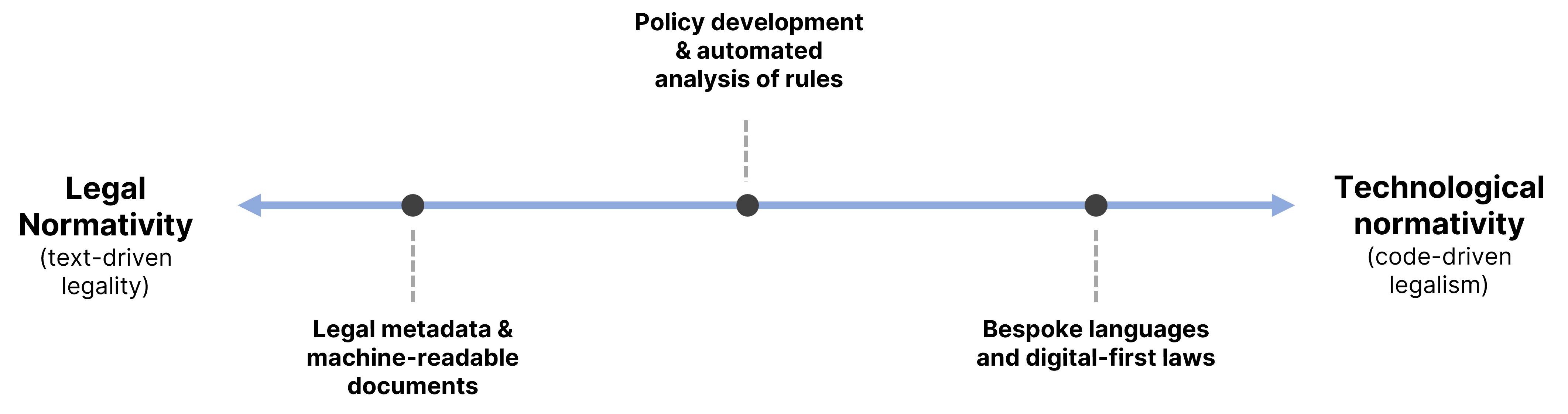

Between these two starkly contrasted ideas of rules, textual and computational, there are many different ways in which digital technologies (including RaC approaches) can have an impact on law. The question for the purposes of this chapter is whether they tend towards supporting a legal idea of a rule, or something else. To make some sense of this, we can think of a spectrum, with notionally ‘pure’ legal normativity at one end, and notionally ‘pure’ technological normativity at the other:

Figure 1. A spectrum of RaC normativity

Depending on the type of RaC and where it sits on this spectrum, it will to a greater or lesser degree mix the two types of normativity in one medium. Where the text of the law is embedded unchanged in a digital technology, the anchoring practices of interpretation, deliberation and adjudication that are afforded by text-driven law may well be preserved. But even where that is the case, the system will necessarily include some measure of technological normativity, because it will to some degree structure the behaviour and actions of those who use it, simply by existing. The extent of this will depend on the RaC approach in question, and where and how it is deployed. It might exert normative force directly (e.g. via the user interface, structuring interactions with the system and framing its output34), or indirectly, when the code-driven translations it embeds mediate the meaning of natural language rules (e.g. where a benefits calculator provides an output that is treated as if it is legally accurate, even if this is not true).35 In many cases it will do both.

Depending on whether the RaC system is used in the development and articulation of legal norms ex ante, or in the provision of advice and/or automated compliance and enforcement ex post, the mixture of the two types of normativity will have different effects, with the technological force subsuming a lesser or greater part of what would previously have been text-driven legal ‘force’.

For example, the technological normativity embedded in the design of an application for drafting legislation might have some influence on the legal normativity – the natural language rules – it is designed to assist the writing of. Similarly, the particular way the interface of a legal expert system requests answers from the citizen will frame their understanding of the system and their interactions with it. Again, the legal rules are mediated through the assumptions made by the designers of the system about what kinds of question to ask, and even how to design the question-and-answer interactions. Because those assumptions mediate the experience (one might even say ‘user experience’, or ‘citizen experience’) of the law in ways that are not neutral,36 it is crucially important to consider whether or not they reflect Rule of Law values, and indeed democratic values, not least equality of access.37 There can be no doubt that natural language legal texts are often obscure, complex, require expertise to understand, and can be expensive to access, and that digital technology has an important role to play in solving these significant problems.38 But there is at the very least a risk that we create more long-term structural issues than we solve if we attempt to address those grave challenges by replacing the fundamentally democratic technology of text as the essential foundation of law.

A central concern of COHUBICOL is what might happen when legal and technological normativity are combined in a single system, with legal force being mediated by or even converted into technological force. This is a spectrum of technological impact, and the picture painted above is at the extreme end where legal normativity is entirely supplanted by technological normativity. As we shall see below, that is not the goal of the vast majority of RaC approaches or their creators, although it is a vision that has been mooted by some.39 The hope here is to highlight some of the problems that might arise if we go too far down that road.

Having made this brief initial foray into legal theory, which we will return to at various points below, we can turn to consider the spectrum of impact on law.

3.4 A spectrum of impact on law

In this section, we look at some primary classes of RaC approach, each representing a particular point of equilibrium, or disequilibrium, between legal and technological normativity. As we traverse the spectrum, the potential for more foundational impact increases:

- RaC is used to provide added information to natural language legal documents, to enhance their usefulness in terms of legal search, archiving, and knowledge management;

- RaC approaches are used as a tool to augment policy development and enhance the development of text-driven natural language rules;

- RaC translations are made available for third parties using RaC to implement legal norms directly in their own systems in order to achieve ‘compliance’;

- The executive provides RaC translations of natural language legal rules that it uses in service delivery;

- The legislature promulgates digital-first RaC rules that are taken to be law and enforced automatically.

In considering these approaches and their potential impacts, we deliberately maintain an internal perspective on what law is and is for, as set out in depth in the first Research Study on Text-driven Law.40 This means that we do not dive into the technical specifics of these RaC systems to assess how they perform on discrete computer science tasks developed according to the internal perspective of that discipline. In many cases those tasks have no relationship to the goals and purposes of the law, and the notion of performance that is considered bears little relationship to legal protection and the Rule of Law.41 Instead, we hope to highlight those claims that are particularly salient in terms of the kind of critical appraisal the COHUBICOL project aims to foster.42 This means considering questions such as: What do RaC approaches afford (and disafford), and to whom? How do they interface with or change the mode of existence of law? And what impact do they have on the capacity of the law to provide protection?

3.4.1 Stage 1: Legal metadata and machine-readable documents

We saw above that the threshold between technological normativity and legal normativity will vary according to (i) the design of a system, and (ii) the extent to which we treat its output as having legal effect. The bigger the role that technological normativity plays, the further along the spectrum the system will sit – with the potential for a simultaneous diminution in the role played by legal normativity.

At the least contentious end of the normative spectrum are RaC approaches that provide mechanisms to tag or ‘mark-up’ the structure and elements of legal documents, and in particular legislation. Such approaches are closely connected with the vision of the ‘Semantic Web’, where metadata is added to documents to provide additional information on what they contain, which in turn allows them to be processed by computers in ways more relevant to their domain of use. To give an example, the elements of a legislative document can be tagged to specify their structure: recitals, chapters, parts, sections, paragraphs and articles are specified as such, rather than left as blobs of text that can only be processed without reference to their meaning within the relevant domain (crucially, this structure is different from the visual structure that can be achieved in an ordinary word processor using headings, indentation and numbered lists; the structured tagging referred to here is usually stored internally within the document’s file and is invisible to the reader).

3.4.1.1 Structural markup

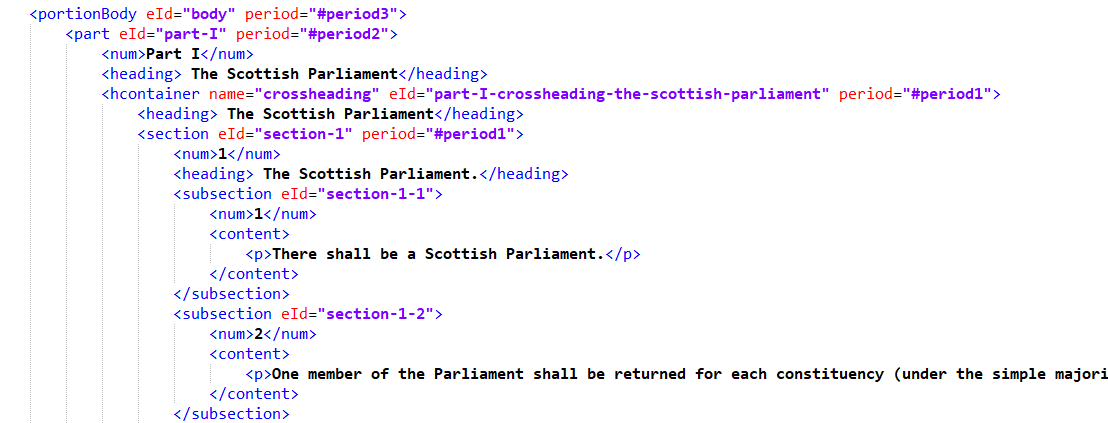

One of the primary RaC technologies used for this purpose is Akoma Ntoso, an XML (eXtensible Markup Language) vocabulary for legal documents. Akoma Ntoso has been standardised, and as such is freely usable by anyone – and indeed it forms the backbone of numerous legal drafting, publishing and archiving initiatives.43

Figure 2. Akoma Ntoso markup (left) and generated HTML (right)

The left part of Figure 2 shows the markup, or tagging, within an Akoma Ntoso (AKN) version of section 1 of the Scotland Act 1998. The substantive legal text is shown in black, while the tags are shown in blue, with attributes in red. The tags specify the various elements of the Act, including its Parts, headings, sections, subsections and their numbering. From this source document further versions can be generated for human readers. The right of Figure 2 shows a web page (HTML) version of the same section, generated from the same source, with identical binding text.44
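To make the idea of structural tagging more concrete, the following is a minimal sketch in Python of how a program might read such markup. The XML fragment is a hand-written, heavily simplified approximation of an AKN document and is not the official markup of the Act: it omits the metadata block a real document would carry, and the `eId` values are assumed for illustration. The helper names `list_sections` and `subsection_text` are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace of the Akoma Ntoso 3.0 vocabulary.
AKN_NS = {"akn": "http://docs.oasis-open.org/legaldocml/ns/akn/3.0"}

# Simplified, hand-written fragment for illustration only. A real AKN
# document also carries a metadata block, and the eId values here are assumed.
SAMPLE = """<akomaNtoso xmlns="http://docs.oasis-open.org/legaldocml/ns/akn/3.0">
  <act>
    <body>
      <part eId="part-1">
        <num>Part I</num>
        <heading>The Scottish Parliament</heading>
        <section eId="section-1">
          <num>1</num>
          <heading>The Scottish Parliament</heading>
          <subsection eId="section-1-1">
            <num>(1)</num>
            <content><p>There shall be a Scottish Parliament.</p></content>
          </subsection>
        </section>
      </part>
    </body>
  </act>
</akomaNtoso>"""


def list_sections(xml_text):
    """Return (eId, number, heading) for every element tagged as a section."""
    root = ET.fromstring(xml_text)
    found = []
    for section in root.iterfind(".//akn:section", AKN_NS):
        num = section.findtext("akn:num", default="", namespaces=AKN_NS)
        heading = section.findtext("akn:heading", default="", namespaces=AKN_NS)
        found.append((section.get("eId"), num.strip(), heading.strip()))
    return found


def subsection_text(xml_text, eid):
    """Return the text of the subsection with the given eId, or None."""
    root = ET.fromstring(xml_text)
    for sub in root.iterfind(".//akn:subsection", AKN_NS):
        if sub.get("eId") == eid:
            paragraphs = sub.iterfind(".//akn:p", AKN_NS)
            return " ".join("".join(p.itertext()).strip() for p in paragraphs)
    return None
```

Because the tags identify what each piece of text is, the program can ask for "the sections" or "subsection (1) of section 1" directly, rather than guessing at structure from headings or indentation. The legal text itself passes through untouched.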

At this end of the normative spectrum, the affordances of RaC are perhaps unglamorous but nevertheless extremely useful. The fundamental structure provided by markup languages like Akoma Ntoso in turn creates a foundation which can be used to enhance other software systems that lawyers and citizens frequently rely on.45 For example, legal search systems can utilise the structure of AKN documents to facilitate more accurate and targeted search (for example by restricting results to those provisions tagged as recitals, or pinpointing a specific individual section of an enactment). Because the document structure is explicitly specified by the creator of the tagged document, who will usually be the legislative drafter, there is less reliance on fuzzy searches that treat the content of a document essentially as a bag of words with no structure.46 Although such search approaches have improved dramatically, the capacity to target with certainty and isolate a specific part or parts of a document and to access metadata about them is a powerfully generative affordance of this kind of RaC system. It has allowed legal archival and database systems to provide more granular access to the text of the law, and to track its evolution and status over time as it comes into force and is amended or repealed. Metadata about if and when a specific enactment has come into force allows for ‘point-in-time’ displays of which parts of a legislative instrument have legal effect at a given moment. The structured documents also allow for cross-references between provisions to be identified, enabling more comprehensive understanding of the interconnected legal effects of legislative provisions.
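The targeted search and ‘point-in-time’ affordances described above can be sketched in a few lines of Python. This is an illustrative model only: the `Provision` record, its field names and the sample dates are all hypothetical, and the treatment of the repeal date as the first day on which a provision is no longer in force is an assumption that a real system would need to settle by reference to the law.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class Provision:
    """Hypothetical record combining a provision's text with its metadata."""
    eid: str
    kind: str                       # structural tag, e.g. "section" or "recital"
    text: str
    in_force_from: Optional[date]   # None means not yet commenced
    repealed_on: Optional[date] = None


def in_force_at(provisions: List[Provision], when: date) -> List[Provision]:
    """Point-in-time view: provisions commenced on or before `when` and not
    yet repealed. Assumption: a provision ceases to apply on `repealed_on`."""
    return [
        p for p in provisions
        if p.in_force_from is not None
        and p.in_force_from <= when
        and (p.repealed_on is None or when < p.repealed_on)
    ]


def of_kind(provisions: List[Provision], kind: str) -> List[Provision]:
    """Structural search: keep only provisions carrying the given tag."""
    return [p for p in provisions if p.kind == kind]


# Invented sample data; the dates do not describe any real enactment.
PROVISIONS = [
    Provision("section-1", "section", "Text of section 1", date(2000, 1, 1)),
    Provision("section-2", "section", "Text of section 2", date(2000, 1, 1),
              repealed_on=date(2010, 6, 1)),
    Provision("section-3", "section", "Text of section 3", None),
    Provision("recital-1", "recital", "Text of recital 1", date(2000, 1, 1)),
]
```

Calling `in_force_at(PROVISIONS, date(2005, 1, 1))` yields sections 1 and 2 and the recital, while `of_kind(PROVISIONS, "recital")` isolates the recital alone. Neither query inspects the wording of the provisions; both rely entirely on the metadata supplied by whoever tagged the document, which is why the accuracy of that metadata matters.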

One prominent example implementing these affordances is the UK’s online public database of legislation, legislation.gov.uk, which provides machine-readable versions of most primary and secondary legislation.47 The provision of a combination of (i) authoritative legal text and (ii) a structured machine-readable format means that third parties can build applications with granular access to the official source of legal text via API.48

3.4.1.2 Drafting software

One example of a class of application that builds upon the foundation provided by structured markup is the specialist software used by parliamentary counsel to draft legislation. Two prominent examples of these ‘integrated development environments for legislation’ are Legislation Editing Open Software (LEOS),49 developed by the European Commission, and LawMaker,50 developed by the National Archives and used in the UK. Precise functionality varies, but these systems have replaced the use of word processors for drafting legislation, which required complex and unreliable template files and had limited capacity for integration with other systems or for collaborative working.51 At its core, legislative drafting software produces documents directly within a structured format such as Akoma Ntoso (instead of in a generic word processor or Web format). As new elements of a piece of legislation are drafted – articles, sections, subsections, paragraphs, etc. – they are immediately tagged with the relevant structural elements. This is usually done ‘in the background’, such that the drafter sees only the formatted natural language document.

As more legislation is produced directly in a structured format using such specialist software, the requirement to convert a traditional unstructured document is removed, which in turn minimises the likelihood of errors being introduced during conversion. On top of this core difference with traditional processes of creating and digitising legislation, these drafting systems are designed specifically to facilitate various aspects of the specialist work that legislative drafters do. This includes, for example, defining document structure to limit the potential for mistakes, facilitating collaborative editing across teams, tracking document versions, interlinking with legal databases for inserting/checking cross-references, allowing drafts of documents to be shared, and modularising common elements of the legislative workflow.52

3.4.1.3 Potential impact(s) of code-driven law

One can appreciate that by including additional metadata within the machine-readable structure of a document, approaches such as Akoma Ntoso can afford a deeper understanding of a particular piece of law which in turn might have a bearing on its legal interpretation. At the same time, there is in principle no effect on the natural language legislative document, as was shown on the right of Figure 2 – all things being equal, access to the law is not affected, nor are the traditional appearance or visual structure of the legislation. Machine-readable structured legal documents have the same basic interpretative affordances as do word processor or PDF versions of the same document.

3.4.1.3.1 Representing legal meaning and structure

The normative impact on law-as-we-know-it of providing basic machine-readable tagging of structure within legal documents appears minimal, provided what is tagged does not purport to provide the legal meaning of the elements of the document, but rather (and only) its unambiguous structure and the metadata required to capture it. This is fundamental, because to attempt to schematise the meaning of legal norms in an unambiguous and universally-accepted way is to elide one of their core affordances: the capacity to disagree about what they ought to mean. Attempting to codify the substantive meaning of legal norms is to do the job of the law before it gets the chance.

This concern is less acute with respect to the structure of legal norms or, even less problematically, the structure of the documents that contain their text. It is rarer for parties to disagree about whether a piece of text qualifies as a ‘section’ or ‘article’ of an enactment than it is for them to argue about what that section or article ought to mean – the latter type of argument is of course the bread and butter of litigation.53 But potentially problematic is the inclusion of metadata about, for example, the moment at which a provision came into force.54 If one hopes to rely on point-in-time snapshots of the state of the law, these need to be accurate to avoid potentially significant legal consequences. Dates and computers are not always good bedfellows,55 however, and even within text-driven law the question of how to specify them consistently is not without complexity.56 The consequences of inaccuracy could be significant, and a novel regime of liability might be necessary to account for them.57
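One simple way in which dates can go wrong in software is ambiguity of format. The Python sketch below, which uses only the standard library, shows the same written date being read as two different days depending on an assumption about day and month order. The function name `parse_slash_date` and the sample date are hypothetical and are not drawn from any real commencement provision.

```python
from datetime import date, datetime


def parse_slash_date(raw: str, day_first: bool) -> date:
    """Parse a 'NN/NN/YYYY' string under an explicit day/month convention."""
    fmt = "%d/%m/%Y" if day_first else "%m/%d/%Y"
    return datetime.strptime(raw, fmt).date()


RAW = "01/02/1999"  # invented example date

# The same characters yield two different dates depending on the convention.
as_day_first = parse_slash_date(RAW, day_first=True)     # 1 February 1999
as_month_first = parse_slash_date(RAW, day_first=False)  # 2 January 1999

# An ISO 8601 date (year-month-day) leaves no room for that ambiguity.
unambiguous = date.fromisoformat("1999-02-01")
```

If a commencement date were stored in the ambiguous form, a point-in-time query could be wrong by about a month without any error being raised. Storing dates in an unambiguous format removes this particular risk, although it does not resolve the legal question of when a provision actually takes effect.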

If it is possible for such technical issues to be reliably overcome, and tagging of the text is limited to structural elements of legislation that are already recognised by the law, then the fundamentals of legal effect would seem to be unaffected. The mode of existence of legal norms is unchanged; legal rules are posited in natural language, they are produced by performative speech acts whose validity is governed by positive law (itself the product of the same essential process), and they become institutional facts within the legal-institutional order. The medium by which the legal texts are made available simply augments those texts with further information that may be contextually and legally relevant to their application in the real world, without affecting the capacity and methods of interpreting them. The capacity of the law to afford protection, built on the foundational capacity of text to afford multi-interpretation – and thus contest, stability, and geographical and temporal reach – is unchanged.

3.4.1.3.2 Extending the scope of legal protection

When systems are built on top of this foundation of structured documents, there is greater potential for normative impact on law and on legal effect. We saw above how searching and archival practices are extended by this type of RaC system, and how the affordances of this kind of RaC create new possibilities for legislative drafting practice. Improving the capacity to search, categorise and reference legal materials ought in principle to benefit users of the law. Affording access to the ‘raw material’ of law is a fundamental prerequisite of the capacity of citizens and their legal agents to develop novel arguments that are legally valid, a capacity that underpins legal protection.58 This is particularly true under the conditions of contemporary law, the volume and complexity of which make it difficult if not impossible for the citizen (let alone practitioner) to make sense of all the rules applicable in a given situation. The types of computational assistance afforded by this kind of RaC system may be not just desirable, but may be necessary, if the Rule of Law is to have genuine purchase in the contemporary world.59


In that respect, then, structured legal documents afford more than unstructured legal texts and the older methods of legal search built around them. Structured documents extend what is already possible with natural language legal norms and facilitate the creation of systems that can increase and improve access to legal materials – though much will depend on the assumptions made in the design of those systems, in terms of how concepts such as relevance are handled. In principle, then, technologies at this point on the normative spectrum are an opportunity to strengthen the capacity of the law to provide protection, by facilitating more creative, forceful, or precise argumentation by reference to a wider range of relevant legal materials, the relationships between the provisions they contain, and the metadata that pertains to their status (such as validity and enforcement).

3.4.2 Stage 2: policy development and automated analysis of rules

The next stage on the spectrum of RaC normativity moves beyond the marking up of elements in the document to formalise additional information about the logic of those elements. The central motivating idea is that at a certain level legal rules can be abstracted into syllogisms: logical ‘if this, then that’ statements where conclusions flow deductively from certain premisses. The goal is to represent that essential logic.60

At the point of drafting legislation, logical representation can complement the structural markup at Stage 1 of the normative spectrum. At this stage, the goal is for computation to begin to interact with the meaning of the rules, or at least with how their meaning is likely to be interpreted once they are given legal effect.61 At Stage 1, the tagging allows for computational tools to manipulate the metadata to provide novel affordances (better search, linked cross-referencing, granular citation, etc.). This is sometimes referred to as the rules being ‘machine-readable’.

At Stage 2, what is manipulated are symbolic representations of the rules and the relationships between them. This has been referred to as ‘machine-consumable’ rules, to highlight the different levels of computational tractability. Here RaC approaches aim to capture the relationships between the symbolic representations of rules to enable conclusions to be drawn from them.62 The computer does not understand the linguistic or ‘semantic’ meaning of the rules, or their import within the legal domain – only the formal relationships between their symbolic representations. From both a legal theory and socio-legal perspective this is a fundamental limitation, but this does not mean such systems cannot play a role, for example in the production of higher-quality legislation.63
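A minimal sketch of what it means to draw conclusions from purely symbolic representations is given below. The rule set and the symbol names (`is_resident`, `may_apply`, and so on) are hypothetical, and simple forward chaining is only one of several inference strategies such systems might use.

```python
def forward_chain(facts, rules):
    """Derive every conclusion that follows from the facts under the rules.

    Each rule is a pair (premises, conclusion) of opaque symbols.
    """
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in derived and set(premises) <= derived:
                derived.add(conclusion)
                changed = True
    return derived


# Hypothetical rules: if all premises hold, the conclusion holds.
rules = [
    (("is_resident", "is_adult"), "may_apply"),
    (("may_apply", "income_below_threshold"), "is_eligible"),
]

facts = {"is_resident", "is_adult", "income_below_threshold"}
conclusions = forward_chain(facts, rules)
print(sorted(conclusions - facts))  # ['is_eligible', 'may_apply']
```

Renaming every symbol to an arbitrary token such as `p1` or `q7` would leave the derivation untouched, which illustrates the point that the machine operates only on the formal relationships and not on what the terms mean in law.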

3.4.2.1 Logic checking

At the basic level, logic checking can be another unglamorous addition to the legislative drafter’s toolkit, where computation augments the drafting and subsequent comprehension of the statute that already takes place. As Waddington puts it,

It can involve merely highlighting the logical structures that the drafter is trying to create in the legislation, so that any use of that logic should always be traceable, explainable and open to correction or appeal in the same way as it is when a human follows the logic from the text.64

The end product is in the same medium as traditional legislative drafting – namely natural language text-driven rules – but these are the result of a process that has used some measure of testing and checking to ensure that they meet a base level of logical coherence. The result is legislation that contains fewer mistakes, for example syntactic ambiguities that create outcomes that are impossible to arrive at within the logic of the text, or cross-references to non-existent provisions.65 Since the logic of the output is non-formal and embodied in natural language text, it can still itself be contested, along with the meaning and ascription of the predicates it contains.66
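One of the mistakes mentioned above, a cross-reference to a non-existent provision, lends itself to a very simple automated check. The following is a sketch only: the draft text is invented, and the citation pattern is an assumption that would need to be far richer to cope with real drafting conventions.

```python
import re

# Hypothetical draft: section number -> section text.
draft = {
    "1": "A person who meets the conditions in section 2 may apply.",
    "2": "The conditions are those set out in section 4, subject to section 3.",
    "3": "Section 2 does not apply to a person under 18.",
}

# Assumed citation pattern; real cross-references take many more forms.
CROSS_REF = re.compile(r"\bsection (\d+)\b", re.IGNORECASE)


def dangling_references(sections):
    """Return (source, target) pairs where the target section does not exist."""
    problems = []
    for number, text in sections.items():
        for target in CROSS_REF.findall(text):
            if target not in sections:
                problems.append((number, target))
    return problems


print(dangling_references(draft))  # [('2', '4')]
```

The check flags the problem for the drafter to resolve; it does not alter the text or decide what the reference ought to have been.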

When RaC is used in this way, the formal quality of the resulting legislation is higher, because when it comes to be interpreted there is less chance of encountering a condition that cannot be made sense of in the real world without recourse to a court. Although some forms of linguistic ambiguity are inherent features of natural language, and are fundamental to the contestability that lies at the heart of the Rule of Law,67 avoiding the drafting of patently irresolvable conditions in a statute can only be beneficial in terms of both legal protection and the proper reflection of the intention of the democratic legislator.68

While the courtroom is where law’s capacity to resolve such difficulties is most clearly demonstrated, litigation that arises from mistakes in legislation hardly reflects the higher aspirations of the law. Avoiding them in the first place frees up limited court capacity to focus on conflicts that are of more substantive importance to the individuals affected and to the community as a whole. This is true not just in terms of the closure provided by a specific ruling itself, but also in the deeper sense that the ongoing practice of legality is continued and upheld, and with it the democratic engagement of citizens with the norms and processes that structure and co-constitute society.69

3.4.2.2 Policy development and parallel drafting

Further along this stage of the normative spectrum, RaC can have a more structuring impact on the practices involved in developing and producing legal rules. As we saw with the definitions in section 3.2, the policy sphere is engaging with RaC as both a tool and a perspective at the interface between policymaking and legislative drafting. The OECD’s working paper ‘Cracking the Code’ refers to RaC as ‘a fundamental transformation of the rulemaking process’ and a ‘strategic and deliberate approach to rulemaking, as well as an output’.70 Waddington articulates this vision:

This could mean that legislative drafters and policy officers understand each other better during the drafting, that consultees can more easily grasp what it proposed and demonstrate how it could be changed, that inconsistencies in drafts can be spotted before they become problems, and that those who need to read the legislation can be helped to navigate complex sets of cross-references, conditions and exceptions to other exceptions. Those would represent significant benefits in themselves, without going anywhere near automating the implementation or enforcement of legislation.71

In the same vein, the New Zealand Government’s Better Rules for Government project seeks to bridge the perceived gap between policy intent and implementation, applying a service design approach to integrate policy development with rule drafting.72 It brings together teams from across the legislative process, including policy makers and analysts, legislative drafters, rule analysts, service designers and software developers. Instead of following a sequential process from policy to drafting to implementation, akin to the waterfall approach in software development, the idea is that direct and iterative (‘agile’) collaboration between each discipline will result in rules that better reflect the policy intent of the government. As the findings of the project’s Discovery Report put it:

We concluded that the initial impact of policy intent can be delivered faster, and the ability to respond to change is greater, with:

- Multidisciplinary teams that use open standards and frameworks, share and make openly available ‘living’ knowledge assets, and work early and meaningfully with impacted people.

- The output is machine and human consumable rules that are consistent, traceable, have equivalent reliance and are easy to manage.

- Early drafts of machine consumable rules can be used to do scenario and user testing for meaningful and early engagement with Ministers and impacted people or systems.

- Use of machine consumable rules by automated systems can provide feedback into the policy development system for continuous improvement.73

Other benefits include the development of standard patterns of legislative language that represent recurring policy requirements.74 These set out skeleton formulations for policy aims, and the questions that must be addressed to implement the pattern in a legislative draft. Such ‘modular’ rule formulations can then be used to develop software design patterns that can implement them, thus reducing the potential for misrepresentation of the rules after-the-fact, and the engineering problems of continually reimplementing what could otherwise be a robust standardised approach.75

Once again, the ultimate product is still text-driven legislation, but the process by which that product is arrived at is improved in various ways, owing to closer collaboration and understanding between the cross-disciplinary teams that are involved in the policy-to-legislation process. Although constitutionally speaking the legislature cannot decide in advance the meaning of the rules it produces, their quality as rules might be meaningfully improved where the teams involved in producing them have an understanding of one another’s practices and the constraints they work within, so that the ‘gearbox’ between democratic policymaking and legal drafting runs more smoothly.76

3.4.2.3 Potential impact(s) of code-driven law

The potential impact at this point on the spectrum depends to a large extent on whether the application of the approach is used ex ante during the drafting of the rules, or ex post in the attempt to deliver a service, automate compliance, or provide advice about what the rules mean.

As with the structural markup discussed at Stage 1, what comes out at the end of the process are rules that are, from a formal perspective, identical to what went before. Their fundamental affordances are unchanged (whatever their content might be). The text is still natural language, affording interpretation and contestation. The procedures of the Rule of Law are in principle still available; they take up where the legislative process leaves off, ready to deal with disagreements about legal right and duty in the normal way. And, where the goals of initiatives like the New Zealand Government’s Better Rules project are in fact realised, the quality of the resulting rules is improved – they contain fewer logical anomalies, and their structural translation is more faithful to the goals of the legislature’s policy than it might otherwise have been.

3.4.2.3.1 The threshold for formalisation

Various issues arise, however, in relation to the content of the rules. First is the question of which elements of the legislation should be modelled to undergo checking for logical correctness. As the authors of the Better Rules report recognise, ‘not all rules are suitable for machine consumption’.77 This raises the question of which rules are suited to computational representation and, crucially, who gets to decide this. Just as the distinction between ‘easy’ and ‘hard’ cases is not a simple one in text-driven law, the question of what rules are readily formalisable is similarly vexed. Any rule can be formalised; the question is how it is done, what the effects are of this, how they interplay with Rule of Law values and procedures, and who is affected by the change.


In one of the experiments undertaken by the Better Rules project, for example, only one part of one piece of legislation was considered, in order to ‘keep the problem (reasonably) discrete’.78 While this is an understandable decision in an exploratory scoping exercise, the question of scalability is absolutely key, because the meaning of legal norms is never limited to just the text of the statute containing them but is influenced by other sources of law, including constitutional enactments, case law, doctrine and principle.79

This underlines the importance of understanding from the outset the nature of text-driven law, lest the use of artificially restricted examples give the impression that success on a small scale will be applicable to the wider law and legal system. As Leith suggests, in the legal world (to say nothing of any other domain) it is no defence to say that the intention was to formalise only one area or piece of law, because by nature the discipline of law requires more than that:

legal knowledge, the sociology of law demonstrates, cannot be partitioned off into neat blocks which will fall, one by one, to the technicalism of the AI researcher. Rather, it is only by having a global appreciation of all the aspects of law which will allow each of those aspects to be properly understood – for law is an interconnected body of practices, ideology, social attitudes and legal texts, the latter being in many ways the least important.80

Whether this limited view is a problem will depend on the context in which the RaC system is used. Policy and drafting experts using RaC in the development stage of a statute will perhaps understand the proper (limited) role that the system can play within their broader practice (in any event, RaC in those contexts is mostly aimed at producing well-formed documents rather than pronouncing on the meaning of the law). RaC systems that purport to facilitate compliance or give advice, however, might prompt people to take actions that are misinformed as to the proper extent and meaning of the law. This question of interpretation is of central importance, and we will return to it in section 3.5 below.

3.4.3 Stage 3: bespoke languages and digital-first laws

At this part of the normative spectrum, new domain-specific [programming] languages (DSLs) enable the declaration of rules in a format directly susceptible to computational processing and automated enforcement.81 DSLs can be distinguished from general-purpose programming languages such as Python or Rust because they are designed for a particular class of problem or task.82

In the contemporary RaC context, the subset of DSLs known as ‘controlled natural languages’ (CNLs) is commonly adopted.83 These make programming rules more accessible for non-technical domain experts, i.e. policymakers and lawyers. In some cases, the language design brings the ‘grammar’ and keywords of the CNL closer to that of natural language legal text, to allow it to be read and understood as one. Despite this, as their name suggests, their syntax is tightly constrained so that the rules follow a strictly predefined form.

The compilers of RaC DSLs embed different approaches to the logical aspect of legal reasoning, for example allowing for exceptions to rules (and exceptions to those exceptions), prioritisation of the applicable order of rules, and the role of time in the applicability of a rule.84 The ultimate goal is basically the same: to model the logical structure of rules to produce automated conclusions that can be used for compliance checking, the application of the law by officials, and the provision of advice on how the law might apply in a given situation (or, as is more likely, some mix of all three).
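How exceptions and prioritisation might be modelled can be sketched in a few lines. This is an illustrative assumption rather than the approach of any particular RaC DSL: the `Rule` structure, the numeric priorities and the congestion-charge scenario are all hypothetical, and the role of time is left out entirely.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Rule:
    name: str
    priority: int                     # a higher number overrides a lower one
    applies: Callable[[dict], bool]   # condition over the facts of a case
    conclusion: bool                  # here: whether the charge is payable


def decide(rules, facts) -> Optional[Rule]:
    """Return the highest-priority rule whose condition is met, if any."""
    applicable = [r for r in rules if r.applies(facts)]
    return max(applicable, key=lambda r: r.priority, default=None)


rules = [
    Rule("general rule: vehicles pay the charge", 0,
         lambda f: f["is_vehicle"], True),
    Rule("exception: emergency vehicles are exempt", 1,
         lambda f: f["is_vehicle"] and f["is_emergency_vehicle"], False),
    Rule("exception to the exception: not when off duty", 2,
         lambda f: f["is_vehicle"] and f["is_emergency_vehicle"] and f["off_duty"],
         True),
]

case = {"is_vehicle": True, "is_emergency_vehicle": True, "off_duty": False}
winner = decide(rules, case)
print(winner.name, "->", winner.conclusion)
# exception: emergency vehicles are exempt -> False
```

Everything turns on how the facts are classified before the rules run: whether something counts as a ‘vehicle’ or as ‘off duty’ is supplied as a Boolean, so the interpretative question has already been settled outside the model.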

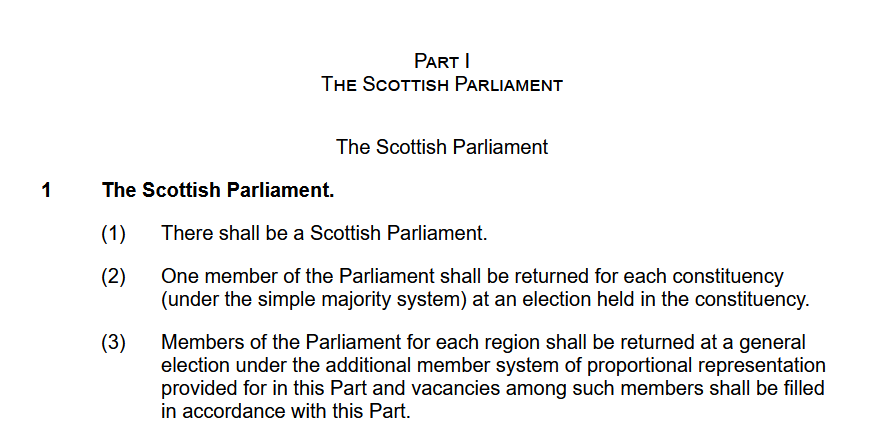

3.4.3.1 RegelSpraak: a controlled natural language

A contemporary example of a RaC CNL is RegelSpraak, used for the calculation of tax liabilities by the Dutch Tax Authority.85 RegelSpraak is based on RuleSpeak, a ‘set of guidelines for expressing business rules in concise, business-friendly fashion’.86 Like logic programming more generally, the modelling of business rules has a long history that gives an insight into the lens through which legal rules are often viewed when approached from that perspective.87 RegelSpraak imposes a strict ‘[RESULT] IF [CONDITION]’ structure on rules.88 Those conditions compare attributes that can be Boolean (true/false), numerical, date, enumerative, or that define the role/object the rule is concerned with.89 As with other formalisms, the rules are defined ‘atomically’ as discrete units, allowing for the identification of broader rule patterns. This is intended to mirror modularisation in programming, and allows for recursive inductive reasoning about the rules.90

Figure 3. A RegelSpraak rule pattern (left) and substantive rule (right)


The aims of RegelSpraak are to be intelligible to non-technical users, to allow ‘automated semantic analysis’, and to facilitate ‘automated execution of the rules’.91 The Dutch Taxation Authority (DTA) uses it to automate the execution of tax rules in its internal systems and on its website, to make it easier to handle the implementation of annual budget updates, and to provide a single, centralised ‘source of truth’ for fiscal rules that, despite their quasi-natural language representation, are ‘only interpretable in one way’.92 Given its intended audience, its implementation within the DTA uses Dutch phrasing and grammatical construction to ‘maximize the resemblance to a natural sentence’.93
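The ‘[RESULT] IF [CONDITION]’ structure described above, with conditions that compare typed attributes, can be approximated in a general-purpose language. The sketch below is a loose Python analogue and not RegelSpraak syntax: the `Condition` and `Rule` classes, the attribute names and the discount rule are hypothetical, chosen only to show one rule object being both executed and rendered as a sentence.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Union

Value = Union[bool, int, float, date, str]


@dataclass(frozen=True)
class Condition:
    attribute: str                  # name of the attribute being compared
    description: str                # quasi-natural wording of the comparison
    test: Callable[[Value], bool]   # the comparison itself


@dataclass(frozen=True)
class Rule:
    result: str
    conditions: tuple

    def holds(self, facts):
        """The result follows only if every condition is satisfied."""
        return all(c.test(facts[c.attribute]) for c in self.conditions)

    def render(self):
        """Generate a '[RESULT] IF [CONDITION]' sentence from the rule."""
        clauses = " and ".join(
            f"{c.attribute} {c.description}" for c in self.conditions
        )
        return f"{self.result} IF {clauses}"


rule = Rule(
    "the passenger is entitled to a discount",
    (
        Condition("age", "is at least 65", lambda v: v >= 65),                # numerical
        Condition("travel date", "is on or after 2024-01-01",
                  lambda v: v >= date(2024, 1, 1)),                           # date
        Condition("resident", "is true", lambda v: v is True),                # Boolean
    ),
)

facts = {"age": 70, "travel date": date(2024, 3, 1), "resident": True}
print(rule.render())
print(rule.holds(facts))  # True
```

In this sketch the wording of each condition and its executable test are written separately by hand, so nothing guarantees that they agree; a controlled natural language aims to remove that gap by making the constrained sentence itself the thing that is executed.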

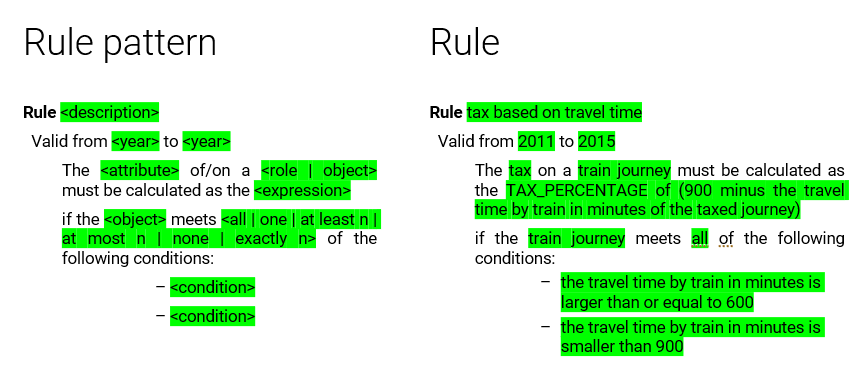

3.4.3.2 Catala: a domain-specific language

Another prominent example of a DSL used in RaC is Catala, a language originally designed for use in applying French fiscal law.94 It adapts the ‘literate programming’ approach to code documentation by putting the executable RaC code immediately adjacent to the legal text which it seeks to translate.95

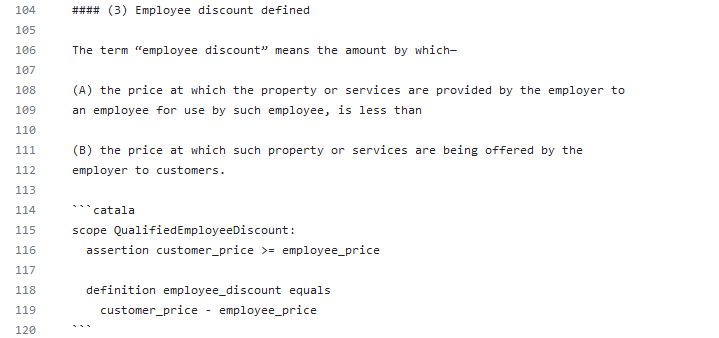

Figure 4. Part of the US federal tax code, formalised in Catala96


Here, literate programming is not (just) intended to aid understanding of the code ex post, but is also integral to the ‘pair programming’ approach used in writing that code. There, an expert in Catala sits alongside an expert in the relevant fiscal law. Again, the cross-disciplinary approach to policy development discussed above can be facilitated: expertise in policy, programming and legal interpretation can be mutually beneficial for the end product, the legal or policy expert interpreting the legal norms and checking the programmer’s implementation in a cycle of iterative testing and refinement.97

The use of a DSL adds an extra layer to the RaC-enhanced policy development process discussed above at Stage 2, by providing a formalisation into which the text of the legal norms can be directly translated. The result is a text that is bi-directional; it is both a natural language text, intelligible by humans without a technical background, and a formalisation that can be compiled and executed by the machine.98 Where the compiler of the DSL is designed around formal verification principles, as Catala is, one can be certain that the compiled code reproduces the logic of the rules expressed in the DSL.99

This builds on the logic checking capacity of logic programming to facilitate the generation of general-purpose code that can be used in production systems. The translation of the logical structure of the legislation into a medium that can be (i) checked computationally, and (ii) understood by a non-technical reader, means that the latter – usually a legal expert – can verify that the output of the code is congruent with how they expect the legal rules to operate.

3.4.3.3 A ‘single source of truth’

The compiler of the DSL converts the intelligible text of the DSL model into a form that allows the modelled logic to be computed just like any other programme. Some languages, such as Catala, are designed to allow trans-compilation of that logic into general-purpose programming languages. The resulting code can then be integrated directly into user-facing applications.100 To the extent that the application is required to comply with a specific law, or purports to enforce or give advice on what the law means, it can be said to be faithful to the model of the law that was written in the DSL.

The goal here is to create a ‘single source of truth’:101 a quasi-natural language version of the law that is endorsed by legal experts as a canonical translation of the text-driven law that it models. This is somewhat analogous to the structured versions of legislative documents we saw at Stage 1 of the normative spectrum. Like those structured documents, the initial DSL translation is generative, in that it can be used as a source from which to produce multiple further versions for use in different contexts. The significant difference at this point on the spectrum, however, is that what can be done with those versions is potentially more impactful because they are computable, automatable, and because they can be directly integrated into infrastructural or application code that will operate automatically in the real world. The threshold between technological normativity and legal normativity is thus quite different from RaC approaches at Stage 1 on the spectrum.

3.4.3.4 Digital-first laws

In the approaches just outlined, the goal is to reduce the friction of translation as far as possible, so that those involved in developing policy and the natural language rules that implement it can also be directly involved in the process of producing RaC translations for use in digital service delivery, compliance, or legal advice and knowledge management.

Going further, there is a push towards various visions of ‘digital-first’ drafting, including the direct use of DSLs for legal rules.102 Policymaking is thus oriented around simplifying rules and disambiguating terms, in order to reduce discretion and facilitate automated processing.103 Here the digital version is treated as the ‘single source of truth’. The law is expressed directly in – rather than translated into – an executable (and presumably still human-readable) DSL, and thus compliance is essentially guaranteed, provided the rules are then successfully integrated into the target systems. Friction between articulating a rule and it being implemented in a digital system is reduced as far as possible and, in an inversion of what was discussed above, natural language versions of the rules are generated from the DSL.

Painting what is probably an extreme picture, Wong envisages that at this point legislative drafters would consider the digital RaC version to be authoritative, while the public would interpret and use the natural language version that is generated from the latter. Taken further still, the digital version comes to be treated as the official source of law both inside the administration and by the public.104 Both legislative and contractual norms are digital-by-default; just as digital audio, video and image formats have become the default means of representing previously ‘analogue’ media (music, film, imagery, etc.), so too can the law have its essential substance represented digitally.105 If the legislature were to get to this point, where law is represented directly in code, the layers and steps of the legislative process would be dramatically reduced, and the code-driven rules would presumably have the imprimatur of constitutional validity.106 We would have Rules as Code as Law.

3.4.3.5 Potential impact(s) of code-driven law

At this point on the normative spectrum, we are starting to depart from legal normativity per se. Controlled natural languages and domain-specific languages that are human-readable but machine-executable limit what can be validly expressed in them, which can have a shaping effect on the content of policy from the outset.

Even if the scope of policy is left unchanged (assuming that is possible in the shift from text to code – see the discussion at section 3.5.3 below), and all that is aimed for is a faithful or ‘isomorphic’ representation of the logic of the statute, this shaping effect remains because of the way technological normativity operates in contrast to text-driven normativity.107 At the point of drafting natural language rules, logic modelling can be helpful, as we have seen above, but at the point of execution or the provision of advice it necessarily elides large parts of what it means to interpret and apply a legal rule. For that reason, ‘isomorphism’ as a goal is fundamentally limited in ways that circumscribe its usefulness for real-world application of legal rules. Even a robust, formally-verified isomorphism must not be confused with the law itself, since rules, however high-quality they might be, are not the whole of the law, even when they are text-driven.


If rules are written directly into code, the effect is stronger still. In the latter case the disconnect between legal and technological normativity is complete: even if a natural language text is generated from the code and treated as notionally ‘authoritative’,108 in practice the normative divergence would mean those subject to the automated execution of the rules would be interpreting a vision of normativity (textual/legal) that is categorically different from what was being imposed in reality (i.e. technological). This would be deeply problematic in terms of the Rule of Law; the executive and the public would not be ‘reading from the same hymn sheet’, normatively speaking, which at the very least would undermine the Rule of Law principle of equality, and the affordance of procedural due process. Those with access to the code would have a different view of what the law is from those with access only to the natural language generated from it. The democratic affordances of textual interpretation would be sidelined, and power would accrue to those with access to the code and hence prior knowledge of the distinct technological normativity it will impose.

Articulating laws directly into code for the purposes of execution shifts us closer to computational legalism.109 It sidesteps the affordances of text-driven law, because automation is precisely the goal, eliding the direct and indirect values of those affordances. The relationship between legal and technological normativity shifts dramatically away from an equilibrium between rules posited ex ante and interpretation and procedure happening ex post. Rules become the central focus, and at the same time because those rules are computational rather than simply textual, imposing technological rather than legal normativity, their capacity to impose themselves is far stronger and their constitutional acceptability is accordingly much weaker.110

3.5 Anticipating legal protection under code-driven law

Above we set out a spectrum of the potential impacts of Rules as Code on law, and discussed some of the potential benefits and concerns that arise at the various points where RaC systems lie on that spectrum. In this section, we step back from individual systems and approaches to highlight some concerns about RaC more generally: who will benefit from its introduction? How might its maintenance requirements create additional burdens for the Rule of Law? How will it impact the concepts of interpretation and legal effect that are fundamental to legal protection?

3.5.1 Who benefits?

A fundamental question that must be answered with respect to each RaC application is: who benefits? While the intention is generally to improve access to justice, reduce cost, and/or more efficiently convert policy into legal rules, it will take some time to tell whether these laudable goals have been realised. Whatever the goal, however, it remains the case that formalisation means adopting a certain view of the world. At least when used to execute the law, or to advise on its meaning, it imposes a frame that in a plural society it should be the law’s role to keep open. As Schafer puts it,

Legal AI becomes the stalking horse of a very specific conception of justice, turning what should be a contested public debate about the values of law into a technocratic decision of what is computationally possible.111

For all its faults in practice – some of which can indeed be ameliorated by RaC – text-driven law is fundamentally democratic, insofar as natural language normativity affords both accessibility and the co-existence of a multitude of differing worldviews. The systems and procedures built around the technology of text might be flawed and in need of (in some cases serious) reform, but that is not a consequence of text as the central medium of law.


Regarding access to justice, we suggested above that certain RaC approaches, such as structured documents, can materially enhance access to the text of the law, and provide affordances that are genuinely valuable for interpreting legal meaning and legal status. But other RaC systems threaten to create a two-tier justice system, where those without power and the means to access bespoke legal advice must instead make do with the commoditised output of a formalism. Those people will in many cases be in vulnerable positions and, given the normativity of the computational medium, might not appreciate how to manipulate the law to fit their needs or understand what their options are for contestation.112 The risk is therefore that existing problems of access to justice are amplified rather than solved, with focus shifted away from potentially more effective measures such as increasing investment in legal aid (though this is likely to be more costly than RaC and therefore less attractive to some).

Another consideration is how we conceive of and value lawyers’ skills. A great deal of what lawyers actually do lies outside the interpretation of rules.113 By focusing on rules and their automation, we potentially deskill lawyers and reduce the extent of their role as skilled interpreters of those rules in light of their clients’ circumstances, and those circumstances in light of the rules.114 A commodification of expertise might remove lawyerly practices that are societally valuable, both in terms of providing high-quality legal advice that successfully upholds clients’ legal interests, but also in terms of support, understanding, and solidarity, which in many cases will be of great value regardless of the outcome of the case.

At the same time, it is possible that automation might instead free up time for those elements of the role. Understanding the true impact will require further (empirical) research.115 What is necessary, however, is a close(r) understanding of what conditions legal protection and the Rule of Law rely upon for their operation, and thus what theoretical and technological tools practitioners need at their disposal. Indeed, such questions of user need might in fact be better answered in part by the ‘design thinking’ approaches pioneered by the New Zealand Government’s Better Rules project.116 To that extent they might be welcomed as a means of getting to the heart of how those who ‘do’ law can be better understood and supported by technology to uphold its central values.

3.5.2 Increased complexity and maintenance

Viewed through the lens of the Rule of Law, with its dynamically interconnecting parts, it is conceivable that rather than reducing complexity some RaC approaches will produce or even require more of it to work. There may be ripple effects in the legal system, depending on how RaC is adopted – particularly if its outputs are treated as having legal effect.117

For example, procedure and due process might need to be adapted to account for the speed of RaC outputs. Translations in one part of the system might necessitate a cascade of translations in other parts, if we are to avoid the complications of attempting to combine or interface legal and technological normativity in or around the same subject matter.118 Areas of law that might hitherto have been thought to be inappropriate for formalisation might come to require translation, for example interpretative and procedural provisions, in order to support those areas whose formalisation is thought to be uncontroversial. Alternatively, if they are still thought to be resistant to translation, a kind of parallel set of equivalent code-driven rules might need to be developed, just to support the code-driven parts of the law. Interfaces will be required to connect the code-driven body of law with the text-driven, particularly where the latter is deemed authoritative.119 The potential complexity of the interplay between textual rules and code-driven rules is something that will need to be properly anticipated.

Another area of potential complexity is the maintenance of the rules: will they be kept up to date and, if so, by whom? While we generally accept that officially published legislation is often not kept perfectly current, technological normativity is, as we have repeatedly seen, quite different in its capacity to impose itself, and so the problem of inaccurate or out-of-date rules becomes hugely salient. RaC interpretations are at risk of failing to adequately reflect (i) the state of the rules, for example following legislative amendments or repeals, and (ii) the interpretations of those rules, either by the courts or in light of other instruments that have a bearing on their meaning (such as fundamental rights law). Legally invalid outputs produced from such RaC interpretations might be readily relied upon simply because of the medium that delivers and imposes them. If text-driven norms are ‘always speaking’, subject to purposive interpretation that allows for adaptation to meet new or unforeseen circumstances, the potential risk with inaccurate RaC translations is that they are ‘never listening’. Reliance on such translations, which might be inadvertent if they are automatically imposed or appear to have authoritative status, could have significant consequences.
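One way to picture the maintenance problem is a RaC interpretation that records which version of the text it was written against and withholds its output once that text has been amended. The following is only a minimal sketch in Python: the names `EncodedRule`, `is_stale` and `advise`, the dates, and the age condition are all hypothetical and are not drawn from any real RaC system or enactment. It addresses only point (i) above; changes in judicial interpretation, point (ii), cannot be detected by a date comparison and would still depend on human review.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class EncodedRule:
    """A hypothetical RaC interpretation together with its provenance."""
    rule_id: str            # hypothetical identifier
    encoded_against: date   # date of the text version the coder worked from
    last_reviewed: date     # last human review against case law and related instruments


def is_stale(rule: EncodedRule, text_last_amended: date) -> bool:
    """Return True if the text has been amended since the rule was encoded."""
    return text_last_amended > rule.encoded_against


def advise(rule: EncodedRule, text_last_amended: date, age: int) -> dict:
    """Give an advisory output, or withhold it if the encoding is out of date."""
    if is_stale(rule, text_last_amended):
        return {"status": "withheld", "reason": "source text amended after encoding"}
    # Illustrative condition only; not drawn from any real enactment.
    return {
        "status": "advisory",
        "meets_age_condition": age >= 18,
        "last_reviewed": rule.last_reviewed.isoformat(),
    }
```

Even in this sketch, someone must supply and maintain the amendment date, which restates the question of who keeps the rules current rather than answering it.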

3.5.3 Interpretative authority

There are significant normative implications inherent in decisions made about (i) what gets formalised, and in what ways, (ii) who makes that choice and under what authority, (iii) where the resulting system will be deployed and for what purposes, and (iv) which citizens and legal subjects it is aimed at or may come to be subject to its output.120

Where a RaC deployment purports to enforce the law or provide advice as to what it means, it is not sufficient to rely on the coders of RaC translations to ensure there is a ‘human-in-the-loop’.121 Such reliance upends the proper constitutional order: not only do those who write the rules get to decide on their interpretation and the logic of their imposition, they also decide on the extent and the nature of any ‘escape hatches’ for when something goes wrong.

This places too much power in the hands of those creating the rules, undermining the separation of powers and the role of the court in providing authoritative interpretations of the meaning of rules in particular cases (in the knowledge and with the foresight that those interpretations will have salience in future analogous cases). As Bennion puts it,

It is the function of the court alone authoritatively to declare the legal meaning of an enactment. If anyone else, such as its drafter or the politician promoting it, purports to lay down what the legal meaning is the court may react adversely, regarding this as an encroachment on its constitutional sphere.122

It also obscures the responsibility for ascribing or attributing a particular meaning to the rule in a particular case, since any such case has been reduced in advance to a set of abstract variables that it is assumed can exhaust all the salient aspects in any future context where the rule ought to apply. This elides the justificatory element that ought to be inherent in any application of a legal rule, including the responsibility to provide reasons for the conclusion that was reached – essential aspects of the legitimate enforcement of rules.123 Instead, a significant chunk of the rule-application reasoning is front-loaded, in the belief it is deductively universal and therefore not part of the open texture of the law.124 Compliance is foretold; legal subjects are objects of control, rather than agents who get to choose to comply, and how. Engagement with the community might be stunted if we no longer must actively interpret the relevance and meaning of the rules within a given context.125

3.5.3.1 The mirage of human-readable code

As mentioned above, the use of quasi-natural language runs the risk that the body of rules that is developed gets shifted to suit what can be represented in the DSL, even within the policy development process. The fact that it looks much like natural language heightens this risk: some might believe that because it looks like natural language anything can be formalised in it, or, conversely, that anything that cannot be formalised in it is not worth including in the law. This is a framing effect that over time risks limiting the scope of substantive legal protection. It raises the question of whether RaC rules should in fact avoid being human readable.

In the context of applying the rules, given that the automated execution of digitised rules is of a nature categorically different from how a textual rule is ‘executed’, making RaC interpretations look as close to natural language rules as possible might in fact mislead as to their nature.126 The risk of blurring the line between legal normativity and technological normativity might imply that digital representations of the law, insofar as they are directed at application by and to citizens, should actively seek to avoid appearing too similar to natural language rules.

This ambiguity is deepened when we consider how judges should respond to gaps in digital-first RaC ‘law’.127 How would they fulfil their constitutional function in a text-driven way, when the original norm is code-driven? If judges produce a natural language judgment about a space left in a RaC translation, does that then need to be converted into additional code and added to the RaC implementation? Would the separation of powers require that the judges produce the code themselves? Or will they write orthodox (i.e. textual) legal orders that require RaC coders to amend the digital translation? In that case, which seems most plausible, we come back round to the problem of interpretative authority – the constitutionally-empowered court decides, but it is the RaC coder, probably within the executive, who interprets that judgment and implements it in their system. We might end up in an infinite regress, with no acceptable closure, since the type of normativity the court can produce is categorically different from that which will be implemented in practice.

This emphasises the point that RaC systems should never be given legal effect, assuming we still wish the courts to have a text-driven adjudicative role. To do so would introduce logical contortions like the one just described. It would undermine the fabric of the law and the way that legal rules fit into, and reflexively constitute, the complex web of interrelated practices that are oriented towards legal protection and the Rule of Law.

It might be that RaC formalisms should therefore emphasise their technological character, rather than seeking to ape natural language, in order to highlight that they are merely tools of implementation, rather than canonical sources of law. The difference between the two forms of normativity must at all times be clear.

3.5.3.2 Technological normativity and interpretation

Legislative drafters are enjoined to write laws that respect and uphold the Rule of Law; in seeking to produce an ‘internally coherent conceptual scheme’ of rules, they serve ‘core rules of law values of legal certainty, predictability, formal justice and equality.’128 As we saw above, law cannot be split into discrete self-contained parts, and by the same token drafting requires a sensitivity to the broader legal domain within which a new piece of legislation will sit, adapting terminology and conceptual structure to ensure coherence with what has gone before.

If the drafter fails to do this, or does it badly, the problem could be solved through interpretation.129 While legal reasoning can ultimately be presented as syllogistic logic, that comes only at the point of justification of an argument, after various interpretative hurdles have been passed and attributions made (some of which might result from arguments about what that logic itself ought to be), and not before.130 The gap between interpreting a text rule and following through on its implications means latent incoherences in the text can be identified, ignored or if necessary contested. This inherent passivity is central to the nature of text-driven law and the spaces it affords for considered action.131

Code, however, is different. Its execution is effectively immediate, and clear-edged.132 When used for enforcement or advice, the output is the output, echoing the legalist idea that ‘the law is the law’.133 If the coded model fails to integrate properly with models of other legislation that are relevant to it, it will simply fail to execute as expected – perhaps without anyone being aware of that fact. Depending on the extent to which the output is treated as having legal effect, the consequences of this type of normativity will vary. As Barraclough, Fraser and Barnes emphasise, RaC representations ought therefore to be thought of not as translations of the law, but instead as individual interpretations of it.134 This highlights that any given RaC model is not the law per se but is just one interpretation of what the law says and means.135
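The ‘clear-edged’ quality of execution can be illustrated with a deliberately simple sketch in Python. Everything in it is an assumption made for illustration: the function `eligible_for_support`, the threshold figure, and the rule itself are hypothetical and do not represent any actual scheme.

```python
from decimal import Decimal

# Hypothetical threshold chosen for illustration; not taken from any real scheme.
INCOME_THRESHOLD = Decimal("20000.00")


def eligible_for_support(annual_income: Decimal) -> bool:
    """One coder's interpretation of an imagined income condition.

    The comparison is evaluated immediately and admits no borderline
    cases: nothing in it allows for argument about purpose or context.
    """
    return annual_income <= INCOME_THRESHOLD


print(eligible_for_support(Decimal("20000.00")))  # at the threshold
print(eligible_for_support(Decimal("20000.01")))  # one penny above it
```

A difference of one penny flips the result, and the function offers no point at which the person affected could argue that their circumstances warrant a different reading. The choice of `<=` over `<` is itself an interpretative decision, made in advance by whoever wrote the code.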

But the role played by technological normativity creates a crucial difference between code-driven interpretations and other non-authoritative (i.e. non-judicial) interpretations. People, including lawyers, interpret the law all the time, and often they will get it wrong. But the difference with code-embedded interpretations and the systems that execute them is that the ‘user experience’ of the citizen is quite different: the embeddedness of the interpretation tends to diminish the capacity of those affected by it to question its validity or applicability. Depending on whether and how the RaC translation’s (lack of) authority is communicated, they might be misled into accepting the output as accurate or binding. In such situations the problem lies as much in the design of the application as it does in the specific formalism that is used. As Le Sueur suggests, the application in a very real sense becomes part of the law:

we should treat ‘the app’ (the computer programs that will produce individual decisions) as ‘the law’. It is this app, not the text of legislation, that will regulate the legal relationship between citizen and state in automated decision-making. Apps should, like other forms of legislation, be brought under democratic control.136